
(Warning for the tragically delicate: “adult” content)

Yeah, so, I fucking told you so. The professional, “adult” way of saying that is something like, “events have evolved along the patterns we previously anticipated”, but I’m fucking done with being an “adult”. I told you that Trump was a fucking fascist; I told you more than once. Ironically enough (assuming I’m using the term “ironically” correctly, which assumption may itself be a bit ironic), Robert O. Paxton, the scholar upon whose work I lean most heavily for my understanding of fascism, was quite slow in acknowledging the fact that Trump is a fascist. The last straw for Paxton was Trump’s ginning up the mob on January 6th in his (previous) last-ditch attempt to overthrow the government. It was the invocation of the mob that turned Paxton’s opinion, but Paxton is (I am arguing) in error on this matter. J6 was the time Jabba-the-Trump succeeded in ginning up the mob; it was scarcely the first or only time he made such an attempt or appeal. Trump was and is constantly calling for mob action, whether in the form of beating up someone he dislikes, or more general intimidation tactics. Stochastic terrorism is Jabba’s bread-and-butter. J6 stands out not because it was the first time Jabba made such a call; it stands out because it was the time the mob actually turned out en masse. And as Paxton himself astutely notes, it is not success that makes a fascist movement fascist, it is the fascism itself; the intent, not the achievement.

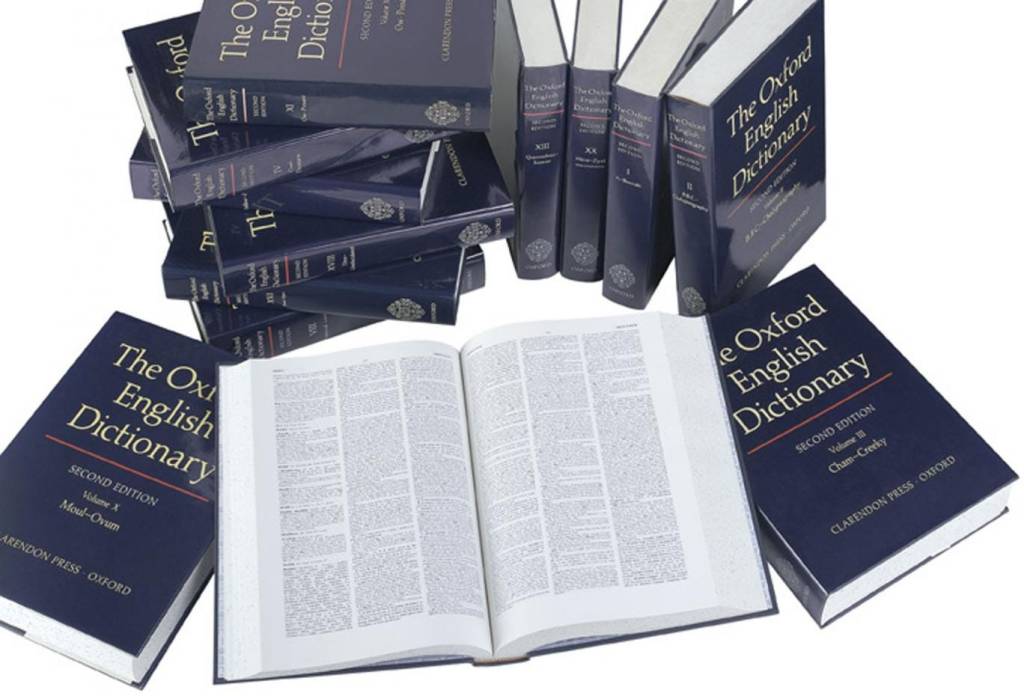

Realistically, Paxton’s hesitancy is quite understandable and worthy of respect. “Fascism” is one of the most promiscuously abused terms out there, second only to “communism” and “scientific.” So while I do contend that his reluctance was misplaced, I nevertheless recognize that it was misplaced for reasons of intellectual integrity. The fact that even the most cautious expert in the field has now (however reluctantly) acknowledged what I insisted was the case almost 10 years ago is enough for me to take a victory lap, declaring I was right all along (regardless of whether it was only a sadly accurate guess at the time).

As a reminder for those who don’t want to go back and read those earlier posts, fascism is:

… a form of political behavior marked by obsessive preoccupation with community decline, humiliation or victimhood and by compensatory cults of unity, energy and purity, in which a mass-based party of committed nationalist militants, working in uneasy but effective collaboration with traditional elites, abandons democratic liberties and pursues with redemptive violence and without ethical or legal restraints goals of internal cleansing and external expansion.

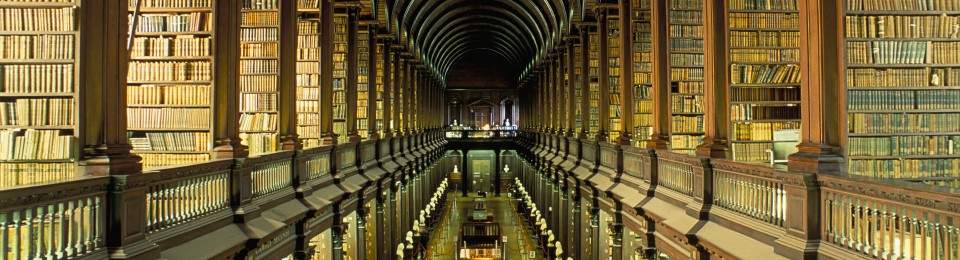

– Robert O. Paxton, Anatomy of Fascism [4267] Kindle edition.

It might be petty of me to note that this definition makes no mention of the invocation of mob action, but I am feeling petty (and bitter), so I’m going to pretend I didn’t say anything about it. What had been previously lacking in Jabba’s rhetoric was not the call for mob action, but rather the call for external expansion. That has now been radically changed with the Orange Shitgibbon’s demands to seize Canada, Panama, and Greenland.

Jabba is now assisted by the even more racist born-rich illiterate buffoon whose name I’ll not mention here. Let’s call him “Leon Cuck.” As the richest man in the world, Leon is the living demonstration that wealth is absolutely no indication of intelligence, integrity, or decency. For the last 8 or so weeks, President Leon and his neutered lapdog Donald have been destroying the framework of democracy in this country with promiscuous abandon, aided and abetted by members of the Republican party, every one of whom has had to get reinforced kneepads sewn into their clothing for all the time they spend on them copping Dear Leader’s joint.

Leon’s shiny new exercise in disregarding any pretense of legality, the Department Of Unelected Cunts Harming Everything (“DOUCHE”) – enthusiastically manned by his pimple-popping brigade of cultish InCel blackshirts – is going well beyond any mere grab for, and exercise of, hegemonic domination. This is actually new in the history of fascist movements. In the past, fascist programs were aimed at unfettered power, without let or hindrance. But it was power over an intact social infrastructure. Yes, the fascists always aligned themselves with big money; yes, they always suppressed any manner of labor movement, unions, or worker advancement. But they didn’t set out to annihilate them either. Fascists set out to seize the government, not destroy it and all of the citizenry along with it. But that appears to be the path Leon is following.

Of course, Leon is also a fucking moron, and so he’s constantly being caught off guard by his own incompetence. Keep in mind that this individual is nothing more than a born-rich grease-stain like his new best buddy Jabba, a “man” – a term that must be used advisedly – who has never actually created or built anything of his own, but who has only ever leveraged his inherited wealth into taking over other people’s creations and then squeezing more wealth out of those things. Like Jabba, stripped of his inherited money, he is nothing more than a low-rent schoolyard bully. So it is unclear what he expects to accomplish by destroying the very possibility of economic production, especially since the effects of his actions will ramify around the globe.

This might be a good time to interject a fairly obvious point: Leon is a billionaire many hundreds of times over, yet it is not possible – not even in imagination, never mind reality – to earn a billion dollars. The only way a person can acquire that kind of obscene wealth is through theft, fraud, &/or extortion. (Or maybe marrying and then divorcing someone who has acquired that kind of money.)

Under ordinary circumstances – or even just slightly less outrageously extraordinary ones – we in the US could take some small comfort in the thought that the electoral massacre the Republicans would suffer in the 2026 midterms would finally put a check on Jabba’s and Leon’s rampage. But they have largely achieved the hegemonic domination fascists dream of. Even as a few courts get all pouty-faced and call them naughty boys, there is no agency capable of enforcing any judicial ruling against them. If they back down on any issue, it is purely because they choose to do so, as no one can now compel them to. And those midterms: will there even be any?

At this point, I rather expect Jabba and Leon to continue to run roughshod over everyone and everything until they finally gin up large-scale civil protests. At that point, Jabba calls out the military (the Secretary of Defense and Chairman of the Joint Chiefs are both kneelers) to start shooting people. He then declares a nationwide emergency and suspends the Constitution.

I would like to be proven wrong about this, but I’m beyond holding my breath for anything.